I’ve been on the internet a while. It’s my first port of call after a long day of software development in the first world. I’m not sure why, but it used to feel more like somewhere to belong than it does now. With it being a more niche thing back in the day, it didn’t bring all the rot associated with mainstream appeal and adoption. Someone of my type can still look back fondly, longing for the days when a discussion took place on a myBB forum[1] created specifically for discussion of that kind of topic. Those were the days.

In the intervening years, I’ve watched everything novel and unique be swallowed up by big companies that need not compete. Everything that did one thing and did it well, all the contrasting colours of the spectrum, has merged into one hideous grey. All to make a system so convenient and connected that the milliseconds of delay added to my supposedly busy day could mean that I click away.

What I’ve described is important, and tragic. Something noble has died. The spontaneous, creative and quirky have been replaced with the safe, engaging and baiting. There is so much to be said about these things, but today I am writing about one aspect of the postmodern hyperconnected (and lonelier than ever) world that we live in.

Online bots. There are many kinds, and I would argue that it is important to categorise them not just by how sophisticated they appear, but also by what their purpose is. I am going to cover a couple of examples.

We hear a lot about the capabilities of the latest GPT models, but we shouldn’t neglect the simpler ones. Some can be silly and entertaining due to their incoherence. There’s something about a dumb Markov chat bot that hits my receptors like few other things.

But not all unintelligent bots are harmless, even the ones that are glorified copy-pasters. They can have strength in numbers. Ladies and gentlemen, I’d like to introduce you to a nemesis of mine…

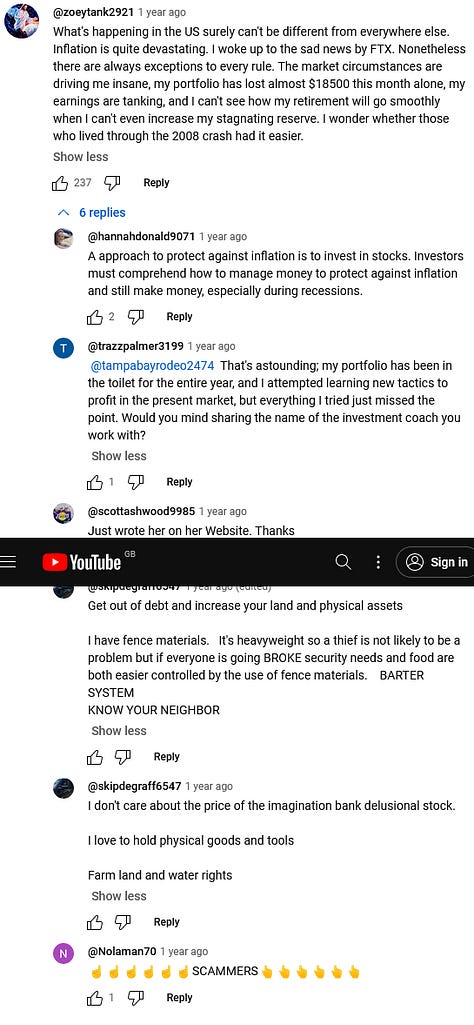

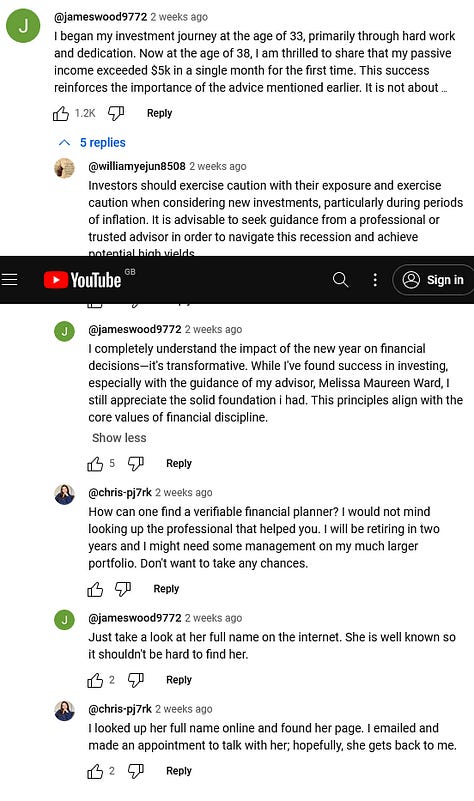

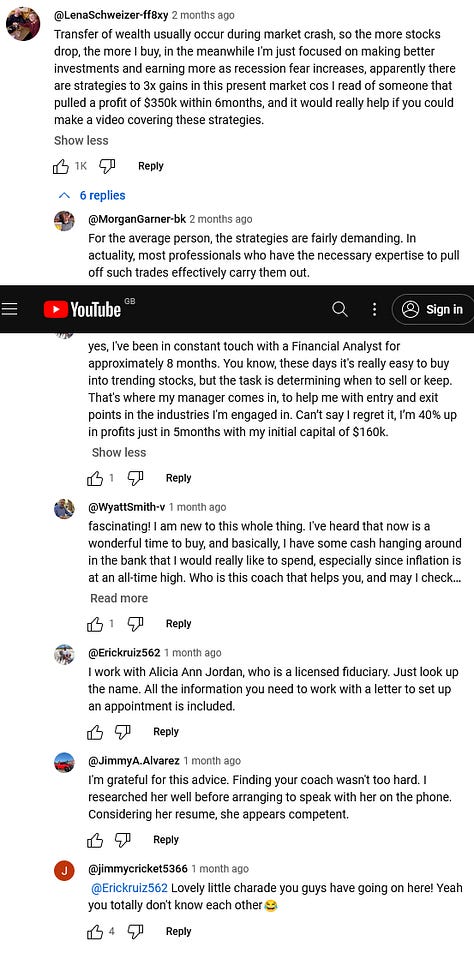

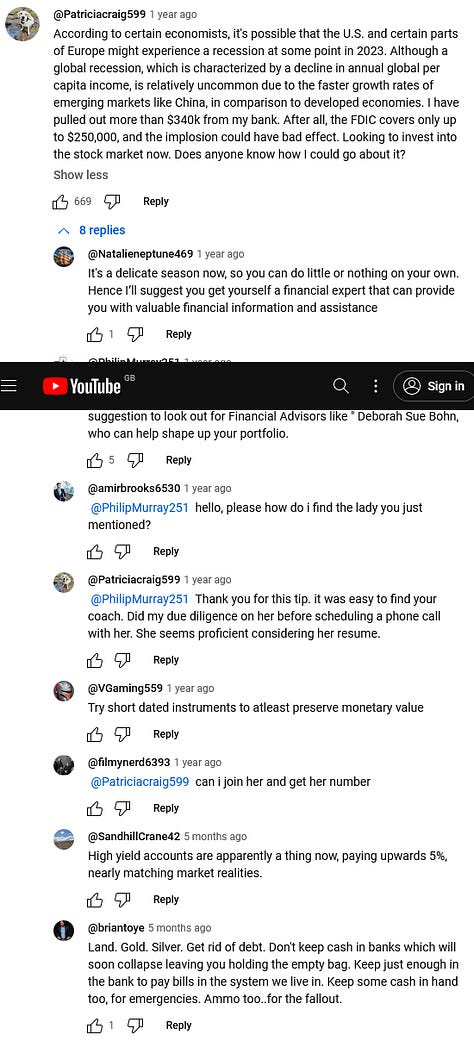

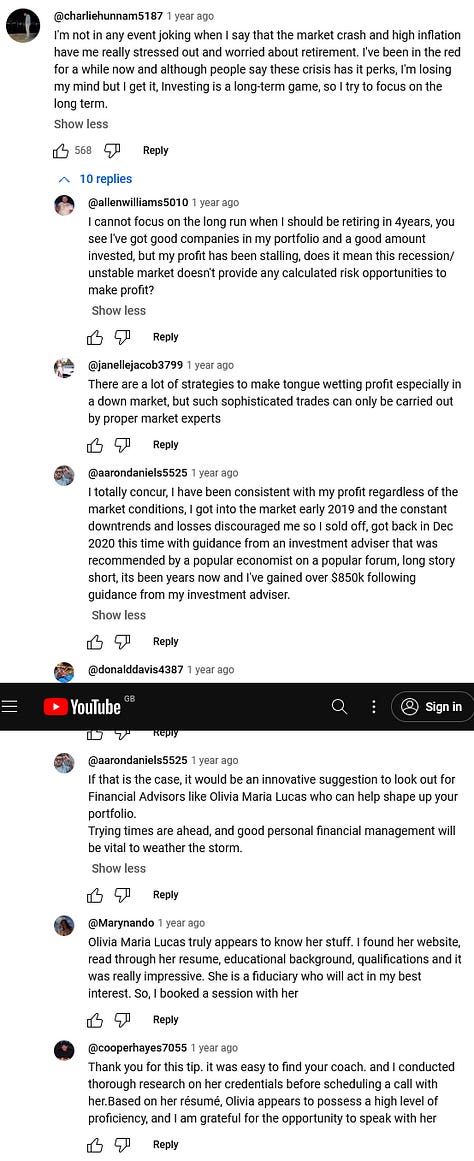

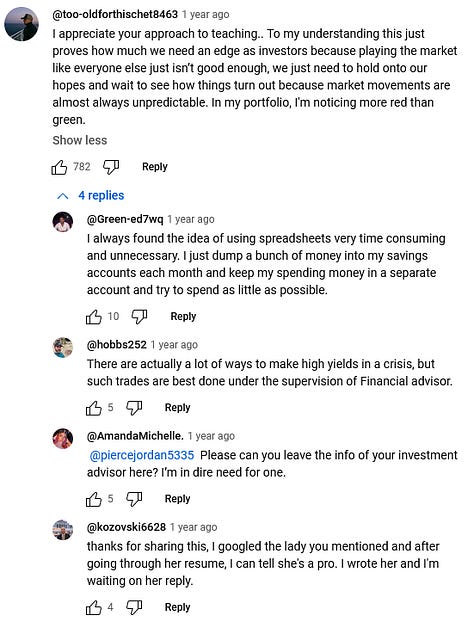

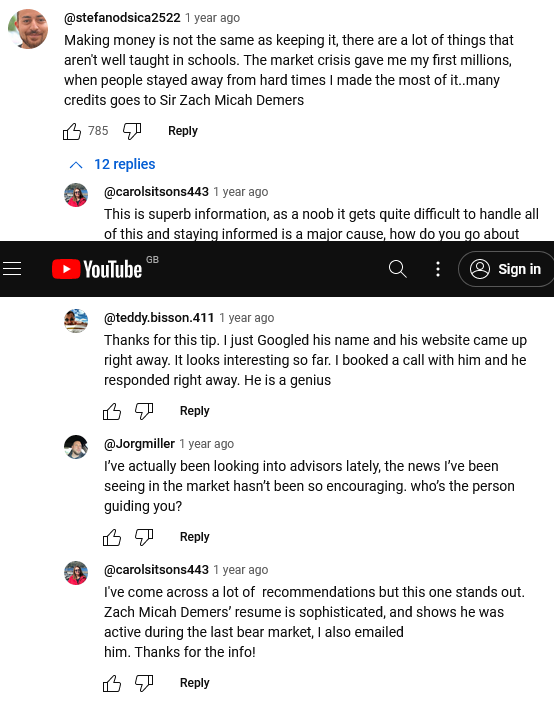

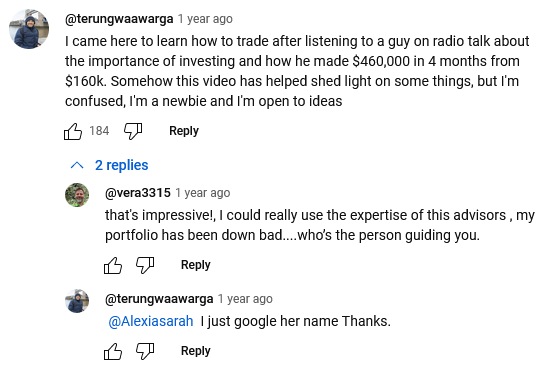

Go to any popular YouTube video which has a financial theme and you will see many examples of influence warfare in the comments. They are a theatrical performance of three characters:

someone in need of advice to achieve some financial goal relevant to the video or the wider economic context;

the name-dropper, who presents the person to fix their problem; and

the corroborators.

These use accounts which appear to be real people. They have photos, if a bit small. Their handles (usernames) seem reasonable. What’s more, the original request for help has a lot of upvotes (usually over a thousand).

Seems legit, right? Not everyone is convinced. If you are one of those who posts “SCAM SCAM SCAM” in a reply to these posts, I see you and I thank you.

One might ask how YouTube allows this. Aren’t they supposed to be using some anti-bot measures to deal with this sort of thing? How do adversaries successfully stage an entire conversation and thousands of upvotes in this way?

This is fake engagement, an appeal to “monkey see, monkey do”. It’s very powerful. Surely it’s not being used in the political space, right? How big is this iceberg?

Anyone who engages with others online needs to be a Zen master, lest they risk finding their mind consumed. There is something about communicating with others whom we don’t have to face that means we can rapidly defenestrate our restraint.

We are egotistical. When we see people agreeing with us, it makes us feel good; we feel validated. If people don’t agree with us, it can ruin our day, or maybe our life. The court of public opinion is given too much legitimacy nowadays.

There is no denying the effectiveness of staging popularity with fake engagement, whether it’s through bot comments or upvotes. (And don’t forget the future hell of entire AI-generated videos.) Anyone who controls people’s minds controls their wills, their beliefs, what they tell others and teach their children… in essence: the captured person, and their generations to follow, all belong to you.

But no worries, we’ve got CAPTCHAs.

The Security Theatre of CAPTCHAs

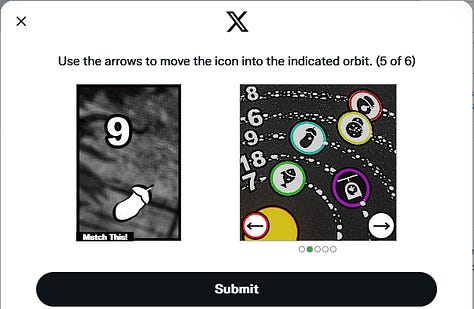

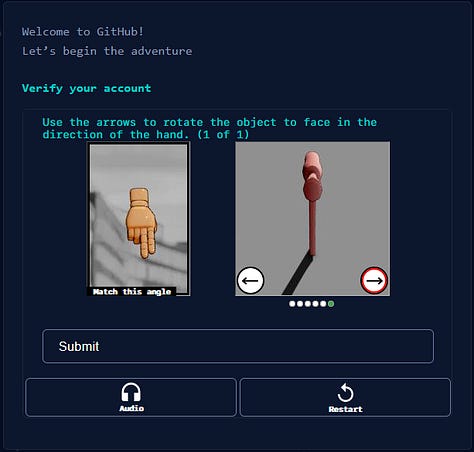

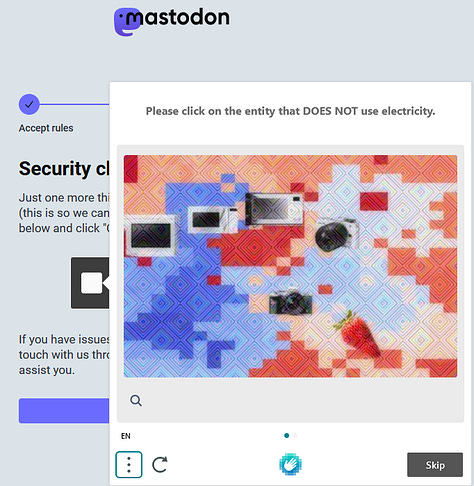

If you’ve signed up for an X account recently (as I have!), or pretty much anything else for that matter, you will have had to complete a rather strange set of puzzles.

The problem is, these are no longer fit for purpose. They don’t ‘work’. Or rather, whether they work or not depends on your point of view. As a user, you may want to go through these anti-bot measures to feel that, after completing them and engaging fully with the platform, you are interacting only with other real people. If you are none the wiser, then it works.

However, this is a false assumption today. AIs can solve them. There is a whole new industry of CAPTCHA solving emerging, powered by AIs or humans in so-called “CAPTCHA farms” (yes, that’s a thing; real people can get paid to solve CAPTCHA puzzles).

Many analysts have done a better deep dive on the bot problem than I have, so I’ll leave you links to a couple of videos which cover more authoritative research here and here.

It isn’t my job to reproduce the research of others, and I would try to go into more detail if I could, but I’ve put enough time into this project already to the point that it’s negatively affecting my health, so I hope you’ll forgive me.

So, given the pickle we find ourselves in, I would like to propose a solution.

Online Zombie Apocalypse

There is a temptation to declare the curtain call of humanity. If bots can complete anti-bot challenges, then it’s over. That’s it. I think we had a good run, but now it’s done. We can never again be sure if we are communicating with a real person. The dead internet theory becomes the dead internet.

But let’s not give up all hope yet. Before I present my idea—which is hardly original, by the way—let’s think about how the meatbags can gain an advantage.

The way I see it is that we need to disincentivise bots by making the economics of running them infeasible.

An internet connection is cheap, and making accounts on many big platforms is free. If the only obstacle to bots is the set of anti-bot methods described above, and these methods don’t work, then it seems trivial for everyone and their mother to be running a bot or two to propagandise the world to vote for their favourite candidate. There may be whole communities participating in a voluntary botnet towards this end. Who knows, and if no one can prove that it is happening one way or another, why assume it’s not?

The only remaining weapons that a platform has to distinguish between man and machine in the present day might be:

statistical methods, i.e. is a user making requests or posting at speeds faster than a human is capable of;

IP address analysis, i.e. are lots of messages originating from the same IP, even if they are from different accounts;

and maybe more nefarious methods, using ads and tracking data to identify bots as belonging to the same device.
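To make the first two of these concrete, here is a minimal Python sketch of what such checks might look like. It is an illustration only, not how any real platform does it: the function name `flag_suspicious`, the event format and both thresholds are hypothetical.

```python
from collections import defaultdict

# Hypothetical thresholds; a real platform would tune these from its own data.
MIN_SECONDS_BETWEEN_POSTS = 2.0
MAX_ACCOUNTS_PER_IP = 3


def flag_suspicious(events):
    """Return the set of accounts that trip either heuristic.

    events: iterable of (account, ip, unix_timestamp) tuples, in any order.
    """
    times_by_account = defaultdict(list)
    accounts_by_ip = defaultdict(set)
    for account, ip, timestamp in events:
        times_by_account[account].append(timestamp)
        accounts_by_ip[ip].add(account)

    flagged = set()

    # Statistical check: consecutive posts closer together than a human could manage.
    for account, times in times_by_account.items():
        times.sort()
        if any(later - earlier < MIN_SECONDS_BETWEEN_POSTS
               for earlier, later in zip(times, times[1:])):
            flagged.add(account)

    # IP check: too many distinct accounts posting from the same address.
    for accounts in accounts_by_ip.values():
        if len(accounts) > MAX_ACCOUNTS_PER_IP:
            flagged |= accounts

    return flagged
```

A bot that waits a few seconds between posts and spreads its accounts across addresses passes both checks, which is the weakness discussed next.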

These can be effective to some extent, but they still give bots value because a bot which has been deliberately slowed down to human speeds is still more efficient from the adversary’s point of view. Using a bot removes a portion of human labour that would otherwise be necessary.

And when it comes to identifying the locations of users or their devices: on a computer, the platform is probably not being accessed with an app, but with web requests (via a browser), which somewhat limits the platform’s ability to check if the user is running under a virtual machine or perform other tests which might be helpful.

So what else can be done?

Proof-of-Work

It’s possible to put a computer to a task such that the result is, in itself, a proof that substantial computation was performed. In other words: it’s a proof that time and electricity were expended. These proof-of-work (PoW) methods have been around for a long time. One of the first proposed uses was as an anti-spam mechanism for email, but its most famous usage by far is in cryptocurrencies such as Bitcoin.

I know that cryptocurrency is a very prejudicial topic in the present day, often associated with scams surrounding the hype. If you find yourself feeling this way, I urge you to keep reading on anyway, as my solution is not a cryptocurrency and shares nothing in common with them except for PoW.

In cryptocurrencies, ‘mining’ is the acquisition of currency. A user who ‘mines’ cryptocurrency shouldn’t just get money for free, because how would that make sense? Money should be worked for. But it’s a computer… what ‘work’ means in the context of computers is quite interesting.

Leaving out much technical detail, a PoW algorithm can be loosely stated as a problem that a computer finds difficult to solve but whose solution, once found, can be sent to others who can verify it very easily. It is a literal proof that work has been done; a “proof of work”.

A Proposed Solution

Please welcome: wxPoWer.

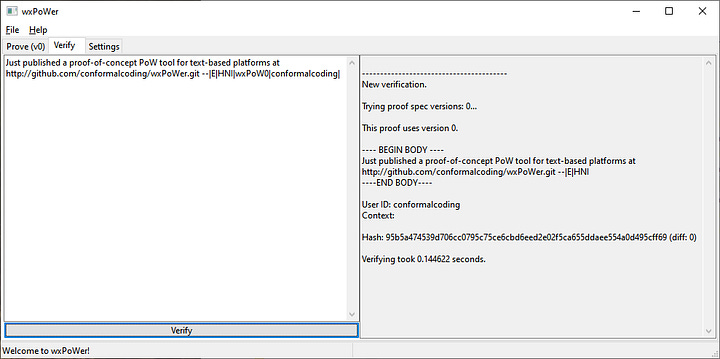

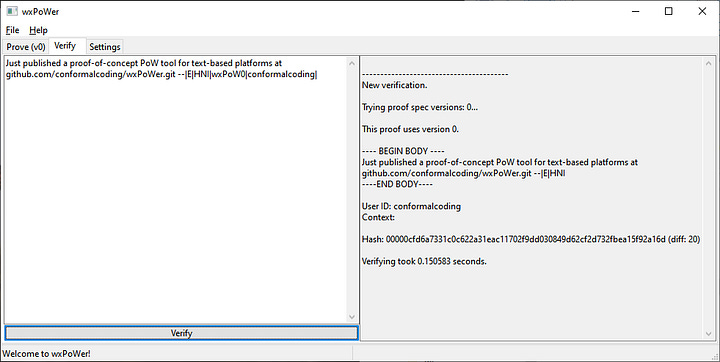

This is a rough proof-of-concept which the users of textual platforms can use to attach a proof (of work) to their posts. You can find this proven post on X here.

There’s a lot to take in from this screenshot. A quick summary:

The message to be ‘proven’ is in the large text box on the left, and in the bottom-right, the proof can be seen. This contains the message plus a bit extra which allows the proof to function as a packaged message and self-proof to send to others.

This is a hash function-based PoW, where the computer produces a fixed-size output (the hash that starts with some zeroes, then random numbers and letters).

Each hash has a difficulty (or ‘diff’), which is defined as the number of leading zeroes[2] in its binary representation.

Each trial[3] is an attempt to find a high-difficulty hash despite the statistically random nature of hashes. The software is unable to influence the output beyond making it a different output.

For those of you with some statistical knowledge, that last point will tell you a lot. If each hash is random[4], that means that the probability of finding a hash with difficulty 23 is equal to the probability of the hash’s first bit being zero, multiplied by the probability of the hash’s second bit being zero, multiplied by …

So the probability of any single hash having a difficulty of at least 23 is 1 / (2^23), or about 0.0000001192.
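For readers who prefer code, the difficulty definition and this probability can be sketched in a few lines of Python. The function names are hypothetical and not taken from wxPoWer; the only assumption is that every bit of a hash behaves like a fair coin flip.

```python
def difficulty(digest: bytes) -> int:
    """Count the leading zero bits of a hash digest."""
    total_bits = len(digest) * 8
    value = int.from_bytes(digest, "big")
    # bit_length() gives the position of the highest set bit, so the gap
    # between it and the full width is the run of leading zeroes.
    return total_bits - value.bit_length()


def probability_of_at_least(diff: int) -> float:
    """Chance that one uniformly random hash has at least `diff` leading zero bits."""
    return 0.5 ** diff


# A 4-byte example: 00000000 00000000 00000001 11111111 has 23 leading zeroes.
print(difficulty(bytes.fromhex("000001ff")))
print(probability_of_at_least(23))
```

Note that the function gives the chance of reaching a difficulty or better, which is the quantity that matters when hunting for a proof.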

This is most unlikely. It is hard to give an exact value for how many trials must be run to find a hash that has some predetermined difficulty. However, we can calculate a number of attempted hashes at which point we’ve had a 50% chance of finding one. This I call the LD50, crudely borrowing a term from medicine:

So for a difficulty of 23, the LD50 = 4194304. That is, after 4194304 hashes, we’ve had a 50% chance of finding a hash that has difficulty 23.

Notice the vagueness of the language here due to the statistical nature. It is possible to be lucky in finding a difficult hash just as much as it is possible to be unlucky.
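As a rough sanity check on figures like these, here is a small Python sketch that assumes every trial is independent and succeeds with probability 1 / (2^diff). The function names are hypothetical and this is not code from wxPoWer.

```python
import math


def chance_within(diff: int, trials: int) -> float:
    """Chance that at least one of `trials` independent hashes reaches `diff`."""
    p = 0.5 ** diff
    # 1 - (1 - p)^trials, computed via log1p/expm1 to stay accurate for tiny p.
    return -math.expm1(trials * math.log1p(-p))


def trials_for_even_odds(diff: int) -> int:
    """Smallest number of trials that gives at least a 50% chance of success."""
    p = 0.5 ** diff
    return math.ceil(math.log(0.5) / math.log1p(-p))


print(chance_within(23, 4194304))   # chance of success by the quoted trial count
print(trials_for_even_odds(23))     # trial count at which the odds become even
```

Under that assumption, 4194304 trials (which is 2^22, half the average number of trials needed) comes out at roughly a 39% chance, and even odds arrive nearer 5.8 million hashes. So the LD50 above is best read as a rough yardstick rather than an exact median.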

Going back to the screenshot, notice that I had performed 5736071 hashes before stopping. Something you don’t see in that screenshot is that I found the difficult hash less than halfway into the hashing time; so had I stopped immediately after that, I would have been very lucky. But because I continued on to my goal of a diff 25 hash and gave up after a lot more time, I became unlucky. Oh well!

You can verify my proof by copying and pasting the post into the Verify tab:

Verifying in milliseconds that which takes minutes (or longer) to produce, without the need for a centralised authority to trust. This is the essence and power of PoW.
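The asymmetry between proving and verifying can be sketched in Python as follows. This is an illustration under stated assumptions, not wxPoWer’s implementation: SHA-256, the `message|nonce` layout and the function names are all chosen here for the example, and the real proof format differs.

```python
import hashlib
from itertools import count


def leading_zero_bits(digest: bytes) -> int:
    """Difficulty of a digest: its number of leading zero bits."""
    return len(digest) * 8 - int.from_bytes(digest, "big").bit_length()


def hash_attempt(message: str, nonce: int) -> bytes:
    # Assumed layout: the message, a separator, then the nonce as decimal text.
    return hashlib.sha256(f"{message}|{nonce}".encode("utf-8")).digest()


def prove(message: str, target_diff: int) -> int:
    """Try nonces in order until one reaches target_diff. Slow by design."""
    for nonce in count():
        if leading_zero_bits(hash_attempt(message, nonce)) >= target_diff:
            return nonce


def verify(message: str, nonce: int, target_diff: int) -> bool:
    """Checking a claimed proof takes a single hash."""
    return leading_zero_bits(hash_attempt(message, nonce)) >= target_diff


nonce = prove("hello", 12)          # about 2^12 hashes on average
print(verify("hello", nonce, 12))   # one hash
```

Each extra bit of difficulty doubles the average work for `prove`, while `verify` always costs one hash. Changing the message after the fact means the same nonce almost certainly no longer verifies, which is what ties a proof to its message.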

wxPoWer is free and open source, available on GitHub here. I go into the meaning of the fields on the application in the doc folder on there.

There is much discussion to be had about what the minimum difficulty should be, and this is very much a market that will change as processors become more powerful over time. But this article is not the place.

How Does This Help Combat Bots?

Please recall the primary focus of this piece: how to disincentivise bots by attacking the economics of running them.

One thing I left out of the above section, but that you will probably have gleaned, is that calculating proofs is a power-intensive process. It has to be; for what does work represent, if not consumption of a scarce resource? Time and electricity are the currency here, which are both equivalent to money.

You may have heard of Bitcoin being criticised for its astronomical electricity usage across the world. It’s fascinating for me to hear; because while it is of course true, it proves the general point that value comes from scarcity. It is a feature, not a bug.

If you are concerned about a PoW-based communication future, I would suggest that you have a pessimistic attitude, looking at the world as though everything has already been invented. Humanity obviously has myriad challenges in the works, but it doesn’t end here. Where is it written that our future won’t consist of innovative ways to utilise scarce resources, and then migrate to using other resources, or perhaps using entirely new approaches? We will be okay. We need to solve problems one at a time, and problems today require solutions today. If there’s no tomorrow, who cares what the day after is like?

Anyway, bringing us back to today’s problem: requiring a proof of work to make a post, or to ‘like’ something, would mean that large farms of bots suddenly have to perform a degree of computation (the expenditure of time and electricity) that, added up, is now simply too expensive. As for humans, well, we will in general engage with these platforms much less, so we will not be as affected. More on that later.
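To put rough numbers on this, here is a back-of-the-envelope Python sketch. Every input in the usage lines is a placeholder assumption (hash rate, power draw, electricity price and the bot farm’s daily volume), so treat the output as a way of exploring the trade-off and not as a measurement.

```python
def cost_per_proof(diff: int, hashes_per_second: float,
                   watts: float, price_per_kwh: float):
    """Average time in seconds and electricity cost of one proof."""
    expected_hashes = 2 ** diff            # mean trials when each succeeds with 1 / 2^diff
    seconds = expected_hashes / hashes_per_second
    kwh = watts * seconds / 3_600_000      # watt-seconds (joules) to kilowatt-hours
    return seconds, kwh * price_per_kwh


# Hypothetical machine: one million hashes per second at 100 W, 0.30 per kWh.
seconds, cost = cost_per_proof(23, 1_000_000, 100, 0.30)

# Hypothetical bot farm making one million proven actions per day.
actions_per_day = 1_000_000
print(f"per proof: {seconds:.1f} s, {cost:.6f} in electricity")
print(f"per day:   {seconds * actions_per_day / 86_400:.0f} machine-days of compute, "
      f"{cost * actions_per_day:.0f} in electricity")
```

With these made-up inputs a single proof is cheap, but the farm needs on the order of a hundred machines running flat out. The minimum difficulty is the lever: every extra bit doubles both figures.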

What About Server-Side?

wxPoWer is described as a drop-in augmentation, meaning that the platform is not aware of it (it is client-side) and can unintentionally mess with it. In short, it is for the user to make it work. And naturally it is error-prone, not to mention inconvenient.

If you make a post on X which contains a URL, it will be shortened in the uploaded post. Interestingly, copying and pasting the post puts the original URL back, though it is not exactly the same URL if you originally omitted the protocol at the start, as I did in this proof.

I have lots of ideas as to how this could be implemented on the platform itself which I will mostly leave out, maybe going into them in another piece. The user still proves each action of course, but the platform will verify before allowing public visibility. A kind of zero trust approach would be an ideal tenet, allowing users to see not just other users’ messages but their proofs for one’s independent verification.

However, it will still suffer from the same issues as a client-side approach, which are primarily inconvenience and added communication latency. The latter could be mitigated by users flagging others as trusted, thus being able to access messages before they are proven. Again, I may go into my thoughts on this some other time.

More Food for Thought

I’ve left one bit out of my thinking until now, as I think you would have scoffed at it had it appeared before this point.

Please allow me to explore some philosophy: I think that when we are too free to post whatever we want, instantly, without any cost, we say things of very little value. Put another way: our words are as valuable as the cost paid to make them.

This cost isn’t necessarily money, by the way; and arguably it is rarely so (at least directly). I think about the time I’ve spent writing this article. It’s been months in the making, and even though not every section has had the same care and devotion put into it, it is ultimately a rewrite of a rewrite of the same documents containing the same core ideas, refined and re-refined as best as I can, with heart and soul poured in. Big sacrifices have been made. Time has been spent which could have gone towards other things. So, although there may be many who find this all disagreeable, I feel that I have at least paid for my words here.

That being said, I am not a professional writer and this has not been proofread, so who knows? Maybe it will come across as the amateurish ramblings of a madman.

Imagine, for a moment, if you can, what the world would be like if people had to prove every post, asking themselves if what they’re saying is worth it, if it’s tactful and well thought out, or if it is impulsive and going to bring more pain, et cetera, before spending the time and electricity cost necessary to publish it. Imagine how the quality of online discourse would infinitely improve overnight.

I sincerely believe that a revolution of this kind is necessary. Not just for combatting bots, but for saving us from ourselves. It’s a price worth paying.

… in the current, digitized world, trivial information is accumulating every second, preserved in all its triteness. Never fading, always accessible. Rumors about petty issues, misinterpretations, slander… All this junk data, preserved in an unfiltered state, growing at an alarming rate.

The digital society furthers human flaws and selectively rewards development of convenient half-truths. All rhetoric to avoid conflict and protect each other from hurt. Everyone withdraws into their own small gated community, afraid of a larger forum. They stay inside their little ponds, leaking whatever “truth” suits them into the growing cesspool of society at large. The different cardinal truths neither clash nor mesh. No one is invalidated, but nobody is right.

And this is the way the world ends. Not with a bang, but a whimper.

These words are from a fictional AI written in the year 2001, if you can believe it.

Sadly I cannot prove this entire article with my proof-of-concept solution, but I would like to prove the final thing I have to say:

---- BEGIN PROOF ----

Thank you for reading my article on combatting bots with proof-of-work. I sincerely hope that it at least inspired you to think of your own solutions to this problem. In the meantime, how about we get some sunlight and speak to some people IRL? :) --|I|B0e|wxPoW0|conformalcoding|

----END PROOF----

[1] Or at least I hope it was a myBB forum; I may have forgotten what it actually was… (sad).

[2] Cryptocurrencies often use a more flexible and sophisticated definition of difficulty, but for this proof-of-concept, I stuck with this.

[3] On terminology: hash is both a noun and a verb. As a verb, it is synonymous with trial, i.e. performing trials is sometimes called ‘hashing’. But a hash is the result of a trial.

[4] Note also that the value of each bit is statistically pairwise independent.